Understanding Large Language Models: Architecture and Applications

Introduction

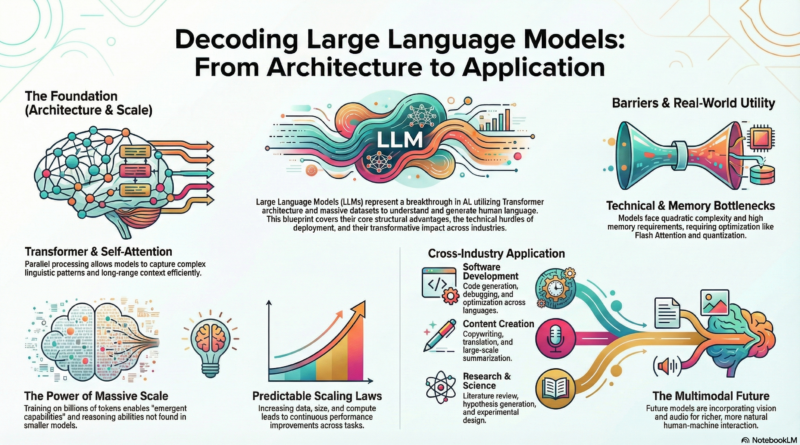

Large Language Models (LLMs) have revolutionized the field of artificial intelligence, transforming how we interact with machines and process information. These sophisticated neural networks, trained on vast amounts of text data, have demonstrated remarkable capabilities in understanding, generating, and manipulating human language. From GPT-4 to Claude and beyond, LLMs have become foundational tools across industries, reshaping software development, content creation, and research methodologies.

Transformer Architecture

At the core of modern LLMs lies the Transformer architecture, introduced in 2017. Unlike recurrent models, which process tokens one at a time, Transformers use a self-attention mechanism that processes an entire sequence in parallel and relates each word directly to every other word, however far apart they are. Key components include multi-head attention layers, feed-forward neural networks, and positional encodings, which supply the word-order information that attention alone does not capture. This parallelism makes training far more efficient on modern hardware and lets the model capture long-range linguistic patterns that previous architectures struggled with.
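
To make the self-attention step concrete, here is a minimal sketch of scaled dot-product attention for a single head, written in plain Python. It is an illustration under simplifying assumptions, not a production implementation: the function names and the toy input are hypothetical, and the learned query, key, and value projections that a real Transformer applies are omitted, so the token vectors are used directly.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention for one head.

    queries and keys are lists of n vectors of length d_k; values is a
    list of n vectors. Returns (outputs, weights), where weights[i][j]
    is how strongly position i attends to position j.
    """
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Compare this query against every key in the sequence.
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        row = softmax(scores)
        weights.append(row)
        # Each output is a weighted average of all value vectors.
        outputs.append([
            sum(w * v[c] for w, v in zip(row, values))
            for c in range(len(values[0]))
        ])
    return outputs, weights

# Toy input (assumption): three token vectors used directly as Q, K, and V.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, tokens, tokens)
```

Each row of `weights` sums to 1, and every position receives a weight from every other position regardless of distance, which is the property that lets attention relate distant words. Multi-head attention runs several such computations side by side on different learned projections.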

Training Data and Scale

The breakthrough capabilities of LLMs emerge from training on massive datasets comprising billions to trillions of text tokens from books, scientific papers, websites, and other diverse sources. This exposure enables models to learn statistical patterns, world knowledge, and reasoning abilities across numerous domains. Scale matters: larger models often show emergent capabilities not present in smaller versions. Research on scaling laws indicates that performance improves predictably as model size, training data, and compute increase, though with diminishing returns, so each additional order of magnitude buys a smaller gain.
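
The shape of such a scaling curve can be sketched with a simple power law. The function below is a hedged illustration only: the form (an irreducible floor plus a term that shrinks as a power of parameter count) follows the general pattern reported in scaling-law research, but the constants `scale`, `exponent`, and `floor` are made-up values chosen for readability, not fitted to any real model.

```python
def predicted_loss(params, scale=10.0, exponent=0.1, floor=1.5):
    """Hypothetical power-law loss curve: floor + scale * params^(-exponent).

    All constants are illustrative assumptions, not measured values.
    """
    return floor + scale * params ** (-exponent)

# Model sizes spaced one order of magnitude apart.
sizes = [1e8, 1e9, 1e10, 1e11]
losses = [predicted_loss(n) for n in sizes]

# Improvement gained by each tenfold increase in size.
gains = [before - after for before, after in zip(losses, losses[1:])]

for n, loss in zip(sizes, losses):
    print(f"{n:.0e} parameters -> predicted loss {loss:.3f}")
```

Under these assumed constants the loss keeps falling as the model grows, but each tenfold increase yields a smaller improvement than the one before it, which is the diminishing-returns pattern described above.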

Technical Challenges

Training and deploying LLMs presents significant technical challenges. Memory requirements for storing model parameters and intermediate activations necessitate specialized hardware and optimization techniques. Standard self-attention has quadratic complexity: its compute and memory grow with the square of the sequence length. Techniques like Flash Attention and sparse attention patterns help mitigate these costs. Inference latency remains a concern for real-time applications, prompting research into model compression, quantization, and efficient serving architectures. Additionally, ensuring consistency and reducing hallucinations requires ongoing research into alignment techniques and retrieval-augmented generation.
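
A little arithmetic shows why these costs matter. The sketch below estimates two quantities: the memory needed just to store a model's weights at different precisions, and the memory needed to hold one layer's full attention score matrix. The 7-billion-parameter model, 32 heads, and sequence lengths are hypothetical figures picked for illustration, and the estimate deliberately ignores activations, optimizer state, and the key-value cache.

```python
GIB = 2 ** 30  # bytes in one gibibyte

def parameter_memory_gib(num_params, bytes_per_param):
    """Memory needed to store the weights alone."""
    return num_params * bytes_per_param / GIB

def attention_scores_gib(seq_len, num_heads, bytes_per_value=2):
    """Memory for one layer's full seq_len x seq_len score matrix
    across all heads, at batch size 1."""
    return num_heads * seq_len * seq_len * bytes_per_value / GIB

# Hypothetical 7-billion-parameter model at two precisions.
fp16_weights = parameter_memory_gib(7e9, 2)    # 16-bit floats
int4_weights = parameter_memory_gib(7e9, 0.5)  # 4-bit quantization

# Doubling the sequence length quadruples the score-matrix memory.
short_context = attention_scores_gib(4096, 32)
long_context = attention_scores_gib(8192, 32)

print(f"weights: fp16 {fp16_weights:.1f} GiB, int4 {int4_weights:.1f} GiB")
print(f"scores:  4096 tokens {short_context:.1f} GiB, "
      f"8192 tokens {long_context:.1f} GiB")
```

The fourfold jump in score-matrix memory when the context doubles is the quadratic growth in action. Flash Attention addresses the memory side by computing attention in tiles rather than materializing the full matrix, while quantization shrinks the weight footprint by storing each parameter in fewer bits.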

Practical Applications

LLMs have permeated numerous application domains. In software development, they serve as code assistants, helping developers write, debug, and optimize programs across multiple languages. Content creators leverage these models for copywriting, editing, translation, and summarization at unprecedented scale. Customer support systems use LLMs to understand queries and provide contextual responses, reducing human workload. Researchers employ them for literature review, hypothesis generation, and faster experimental design. The versatility of these models continues to expand as developers discover novel applications in healthcare, finance, education, and creative industries.

Future Directions

The future of LLMs promises continued innovation and capability expansion. Multimodal models incorporating vision, audio, and other modalities are emerging, enabling richer understanding and more natural interaction. Techniques for reducing hallucinations and improving factual accuracy through retrieval systems and verification mechanisms are maturing. Efficiency improvements in training and inference are lowering the cost of running powerful models, while open-source initiatives and optimized deployment strategies are widening access to them. As these technologies advance, they'll likely become fundamental infrastructure, transforming how we interact with information and automate cognitive work.
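
The retrieval idea can be illustrated with a toy sketch: find the stored passage most similar to the question, then place it in the prompt so the model can ground its answer in it. Everything here is a simplifying assumption. The helper names are hypothetical, similarity is plain word overlap (cosine similarity over word counts) rather than the learned embeddings a real system would use, and the final call to a language model is left out.

```python
import math
from collections import Counter

def bag_of_words(text):
    """Lowercased word counts; a crude stand-in for a learned embedding."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

def retrieve(query, documents, top_k=1):
    """Return the top_k documents most similar to the query."""
    q = bag_of_words(query)
    ranked = sorted(documents,
                    key=lambda d: cosine(q, bag_of_words(d)),
                    reverse=True)
    return ranked[:top_k]

def build_prompt(query, documents):
    """Prepend the retrieved context to the question."""
    context = "\n".join(retrieve(query, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

# Hypothetical two-passage knowledge base.
documents = [
    "The Transformer architecture was introduced in 2017.",
    "Quantization reduces the memory needed to store model parameters.",
]
question = "When was the Transformer architecture introduced?"
prompt = build_prompt(question, documents)
```

Because the answer now sits in the prompt itself, the model can quote it rather than rely on what it memorized during training, which is the intuition behind using retrieval to improve factual accuracy.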

Conclusion

Large Language Models represent one of the most significant advances in AI capabilities, demonstrating remarkable improvements in language understanding and generation that have practical applications across numerous domains. Understanding their architecture, capabilities, and limitations is crucial for developers and researchers working with these powerful tools. While challenges remain in areas like accuracy, efficiency, and safety, the rapid pace of innovation suggests that LLMs will continue to evolve, becoming even more capable and integrated into our daily workflows. Staying informed about developments in this space is essential for anyone working in technology today.